Spatially Varying Nanophotonic Neural Networks

Abstract

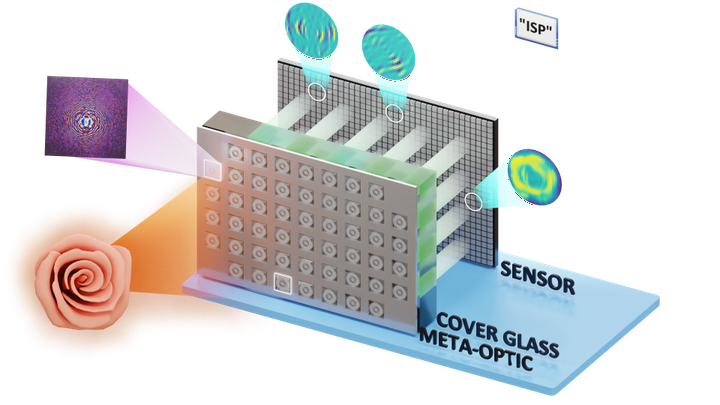

The explosive growth in the computation and energy cost of artificial intelligence has spurred strong interest in new computing modalities as potential alternatives to conventional electronic processors. Photonic processors, which execute operations using photons instead of electrons, promise to enable optical neural networks with ultra-low latency and power consumption. However, existing optical neural networks, limited by their underlying network designs, have achieved image recognition accuracy far below that of state-of-the-art electronic neural networks. In this work, we close this gap by embedding massively parallelized optical computation into flat camera optics that perform neural network computation during capture, before an image is recorded on the sensor. Specifically, we harness large kernels and propose a large-kernel spatially varying convolutional neural network learned via low-dimensional reparameterization techniques. We experimentally instantiate the network with a flat meta-optical system that encompasses an array of nanophotonic structures designed to induce angle-dependent responses. Combining these optics with an extremely lightweight electronic backend of approximately 2K parameters, we demonstrate that a reconfigurable nanophotonic neural network reaches 72.76% blind test classification accuracy on the CIFAR-10 dataset. As such, for the first time, an optical neural network outperforms the first modern digital neural network, AlexNet (72.64%) with 57M parameters, bringing optical neural networks into the modern deep learning era.